Once upon a time, landing in the top three Google search results was the Holy Grail of technology marketing. Those relatively simple times may soon be a thing of the past. Generative artificial intelligence is fundamentally shifting how enterprise technology buying decisions are made, and marketers need to prepare to respond.

Recent Foundry studies have shown that AI is rapidly becoming the primary interface for buyers evaluating tech purchases. This has implications not only for buyer behavior, but also for how vendors position, surface, and validate their offerings.

The good news is that the transition isn’t taking place overnight. AI platforms accounted for just 0.15% of global internet traffic in 2025, compared to 48.5% from organic search, according to SE Ranking. But AI traffic is growing fast and could pull even with search engines by 2029, according to some estimates.

The reason is simple: Recommendations from AI engines convert at a rate four to five times higher than search results, according to Rank Science.

Top of the funnel

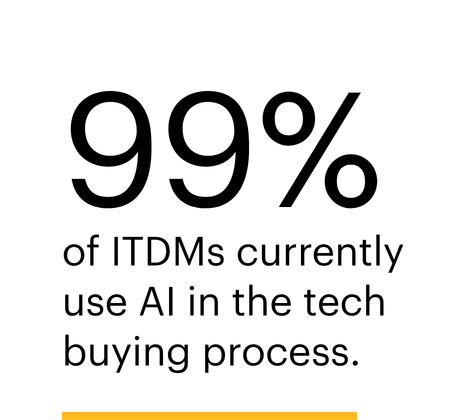

Foundry research shows that AI has inserted itself at the very top of the discovery funnel. The 2026 AI Priorities study found that 99% of IT decision-makers currently use AI in the tech buying process, with the top use cases being to compare solutions and features (48%) and define technical requirements (47%).

This shift has come at the expense of more traditional comparative tools such as analyst reports, peer networks, and events. For example, the use of product demos and pilot testing in evaluation dropped by half, from 66% to 33%, in just one year. While still important, established evaluation channels are moving to later in the buying process.

These results point to a bigger trend: reduced time devoted to purchasing research. Historically, enterprise technology buying followed a staged progression: initial awareness through search or events, shortlist development supported by analyst and peer input, and validation through demos and proofs of concept.

AI collapses much of that sequence into a single interaction. Large language models excel at synthesizing data from multiple sources into a single recommendation. Evaluation sources such as product reviews, blog posts, and social network comments are consolidated and delivered without buyers having to consult each source.

Instead of navigating a fragmented information landscape, buyers are delegating synthesis to AI systems that deliver a single narrative. Vendor consideration sets are being shaped earlier and with less direct vendor interaction.

For marketers, the first impression increasingly happens inside an AI-generated summary rather than on a website, event, or in a sales conversation.


Trust factor

Fortunately, survey results indicate buyers aren’t inclined to take AI recommendations at face value, at least not yet. When an LLM produces a recommendation or shortlist, buyers triangulate with other sources. The AI Priorities study found that 53% cross-check with analyst or editorial content, 47% visit vendor websites, and 43% consult peers. Those figures are consistent with pre-AI behaviors. Traditional sources haven’t disappeared; they’ve become a confirmation step rather than a primary discovery mechanism.

Notably, more than half of respondents to the AI Priorities study said they place only “moderate trust” in LLM outputs, while about one-third expressed high trust. The technology is moving quickly, however, and improvements in LLM output are likely to boost trust scores over time.

For vendors, the new priorities are to achieve visibility in AI outputs and consistency across the external sources buyers use to verify them.

Visibility in AI engines depends on how well a vendor’s information is represented across the range of sources that AI systems ingest and synthesize. While traditional techniques for achieving search visibility, such as optimizing for indexed pages and keyword queries, are still valid, AI optimization differs in several key ways.

AI outputs are less forgiving. Instead of presenting a list of results, they provide a single synthesized answer unless asked otherwise. Vendors that don’t appear in that result are effectively invisible.

Search engines match queries to keywords and backlinks. LLMs interpret intent and generate answers based on semantic relationships. This means vendor-generated content must clearly articulate use cases, differentiation, and outcomes in natural language, not just targeted keywords.

AI models draw from a wide range of sources, including analyst reports, editorial coverage, reviews, and community discussions. Credibility is determined by consistency across the ecosystem, not just messaging on a vendor’s website. This makes third-party sources a more critical validation element. Bylined articles, videotaped interviews, and positive media coverage are more important than ever. The objective shifts from attracting traffic to influencing how the category and vendor are described within the answer to a prompt.

There’s good news in the research for new companies, in particular. The AI Priorities study found that 65% of organizations now have dedicated AI budgets, up from 36% just two years ago. It also found that three-quarters of organizations expect to add new vendors to their portfolios. With many new entrants coming on the market, this suggests that the vendor landscape is becoming more dynamic and fluid, offering greater opportunities for new companies to gain visibility.

Here’s what marketers can do to boost their AI visibility in the short term.

- Structure content for comprehension, not just indexing. Clearly articulate capabilities, use cases, and differentiation points to increase the likelihood that AI systems will understand and accurately portray them.

- Create structured, machine-readable content using tools such as schema markup and product definitions. Map relationships between the company, product, and use case. FAQs are an excellent tool.

- Double down on third-party validation. Analyst coverage, independent reviews, and editorial mentions are not just credibility signals for humans but also source material for AI synthesis and later validation.

- Use consistent naming across channels and encourage partners and influencers to do the same. Discrepancies between vendor messaging and external descriptions can reduce the chance of inclusion in AI-generated summaries.

- Promote experts. AI engines look for proof that the people who build products have the skills to solve the problems customers ask about.
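The structured-content suggestion in the list above can be made concrete with a small sketch. It builds a schema.org `FAQPage` object in JSON-LD that links each question to a product and the company behind it. The function name `build_faq_jsonld`, the company "ExampleCo," the product "ExampleDB," and the sample question are all hypothetical, and whether any particular AI engine actually reads this markup is an assumption, not something the research above establishes.

```python
import json


def build_faq_jsonld(company, product, faqs):
    """Return a schema.org FAQPage dict linking FAQs to a product and its maker.

    `faqs` is a list of (question, answer) string pairs.
    All names passed in are illustrative placeholders.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        # Map the company -> product relationship explicitly.
        "about": {
            "@type": "Product",
            "name": product,
            "manufacturer": {"@type": "Organization", "name": company},
        },
        # One Question/Answer pair per FAQ entry, phrased in natural language.
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


if __name__ == "__main__":
    doc = build_faq_jsonld(
        company="ExampleCo",  # hypothetical vendor
        product="ExampleDB",  # hypothetical product
        faqs=[
            (
                "What use cases is ExampleDB designed for?",
                "ExampleDB is designed for real-time analytics on streaming data.",
            ),
        ],
    )
    # The JSON would typically sit in a <script type="application/ld+json"> tag.
    print(json.dumps(doc, indent=2))
```

Generating the markup from one source of product and company names also supports the consistent-naming advice above, since every page draws on the same strings.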

Think of AI engines as proxies for human researchers. Technical factors like meta tags, keyword frequency, and keyword proximity are less important than demonstrated expertise and third-party validation.

Visibility is no longer just about being found. It’s about being understood.